Nia AI is a context-aware coding teammate that knows your entire codebase for instant architecture insights, multi-file references, automated code reviews, and Slack collaboration—supercharged by advanced LLM orchestration and agentic AI to boost dev velocity.

Free Options

Launch Team / Built With

Nia

Nia

@john_tans thank you so much! lmk how it goes: https://discord.gg/8M3T3mgn8k

Congratulations on the launch @arlanrakh . Is there something that sets Nia AI apart from these apps (cursor, Trae, Cline)which were already in the market.

Nia

@bellam_saiteja Hey Saiteja, thanks for the congrats!

What really sets Nia AI apart is that it indexes your entire codebase, so it can handle multi-file queries and map dependencies across 100K+ lines of code—something the others can’t do with their limited token windows. Plus, we’re working on an upcoming “Agent Mode” that will automatically flag issues, suggest refactors, and even kick off code reviews and PRs on every commit. Personally, I struggle a lot with code reviews so having a teammate by my side would help a lot:)

Hope this helps. Lmk what you think!

@arlanrakh Great, how are you handling token windows while working with large codebases?

Nia

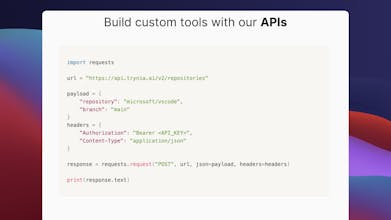

@bellam_saiteja Great question, Saiteja! We tackle token window limitations by dynamically chunking large codebases using chunking + vector DBs . Then, we use a multi-step retrieval pipeline with LangChain/LangGraph with other tools to help us manage very large codebases.

@arlanrakh what's the max context window that you can support? How do you achieve that?

Nia

@chrismessina Great question! We don't rely on a fixed max context window—instead, we dynamically break your codebase into smaller, vectorized chunks stored in a vector db. While the underlying LLM has a token limit (say, 200k tokens for 3.5 sonnet), our approach effectively lets you work with context spanning 100K+ lines of code.

Great product, i would love to use it with the team. One suggestion: include questions asked in Slack responses, it's now obvious to others

which question was asked

Nia

@vladzima Just shipped a new update where Nia recaps the question asked earlier in the thread. It should be live in 5 minutes.

Example:

@Nia AI how does their recommendation algorithm works?

Response from Nia:

Question:

@Nia AI how does their recommendation algorithm works?

Sources:

• twitter/the-algorithm/README.md

• twitter/the-algorithm/follow-recommendations-service/README.md

• twitter/the-algorithm/follow-recommendations-service/README.md

• twitter/the-algorithm/product-mixer/core/src/main/scala/com/twitter/product_mixer/core/pipeline/recommendation/BUILD

• twitter/the-algorithm/follow-recommendations-service/server/src/main/scala/com/twitter/follow_recommendations/flows/post_nux_ml/BUILD

• twitter/the-algorithm/follow-recommendations-service/common/src/main/scala/com/twitter/follow_recommendations/common/base/RecommendationFlow.scala

• twitter/the-algorithm/follow-recommendations-service/README.md

• twitter/the-algorithm/src/scala/com/twitter/recos/user_tweet_entity_graph/RecommendationHandler.scala

• twitter/the-algorithm/src/scala/com/twitter/recos/user_user_graph/RecommendUsersHandler.scala

• twitter/the-algorithm/follow-recommendations-service/thrift/src/main/thrift/recommendations.thriftBased on the repository code, I'll explain how Twitter's recommendation algorithm works, primarily focusing on the Follow Recommendations Service (FRS) as it's one of the main components detailed in the codebase.From the main README.md and follow-recommendations-service documentation, here's how the recommendation system works:1. Overall Architecture

The recommendation algorithm is responsible for serving feeds of Tweets and other content across all Twitter product surfaces (e.g., For You Timeline, Search, Explore, Notifications).2. Follow Recommendations Process

The Follow Recommendations Service (FRS) works through several key steps:a) Candidate Generation

- Located in follow-recommendations-service/common/src/main/scala/com/twitter/follow_recommendations/common/candidate_sources/

- Uses various user signals and algorithms to identify potential accounts to recommend

- Includes methods like:

- Triangular loops

- Two-hop random walk

- User-user graph analysisb) Filtering

- Located in follow-recommendations-service/common/src/main/scala/com/twitter/follow_recommendations/common/predicates

- Applies filtering logic to improve quality and health

- Happens both before and after ranking

- Heavier filtering (higher latency operations) typically occurs post-rankingc) Ranking

- Uses both Machine Learning (ML) and heuristic rule-based ranking

- Process:

1. ML features are fetched (feature hydration)

2. Creates DataRecord for each <user, candidate> pair

3. Sends pairs to ML prediction service

4. Returns prediction score based on:

- Probability of follow given recommendation

- Probability of positive engagement after follow

5. Uses these scores to rank candidatesd) Transform

- Performs necessary transformations on candidates:

- Deduplication

- Attaches social proof (e.g., "followed by XX user")

- Adds tracking tokense) Truncation

- Final step that trims the candidate pool to a specified size

- Ensures only the most relevant candidates are presented3. Social Proof and Recommendations

From the RecommendationHandler.scala, we can see that the system also handles:

- Tweet recommendations

- Social proof hydration

- Different types of recommendations (tweets, hashtags, URLs)The system is highly customizable per use case, with different display locations having their own specific flows and configurations. This allows Twitter to optimize recommendations based on where and how they're being displayed in the product.This is a high-level overview based on the provided repository code. The actual implementation involves many more intricate details and specialized algorithms for different recommendation scenarios.

@arlanrakh Awesome!